Why numbers don’t mean much when it comes to your behaviours!

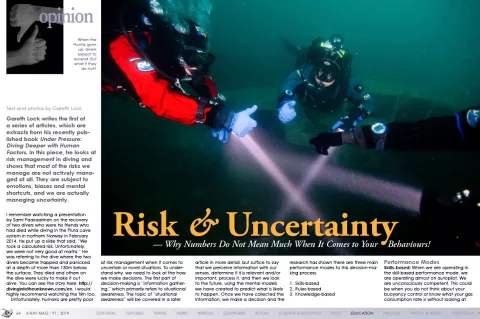

Gareth Lock writes the first of a series of articles, which are extracts from his recently published book *Under Pressure: Diving Deeper with Human Factors*. In this piece, he looks at risk management in diving and shows that most of the risks we manage are not actively managed at all. They are subject to emotions, biases and mental shortcuts, and we are actually managing uncertainty.

Factfile

Gareth Lock is a diver, trainer and researcher based in the United Kingdom, who has a passion for improving dive safety by teaching and educating divers about the role that human factors play in diving—both successes and failures.

He runs training programmes across the globe and via an online portal. In 2018, his online programme won an award for innovation in diving.

You can find out more at: Thehumandiver.com.

I remember watching a presentation by Sami Paakkarinen on the recovery of two friends who had died while diving in the Plura cave system in northern Norway in February 2014. He put up a slide that said, “We took a calculated risk. Unfortunately, we were not very good at maths.” He was referring to the dive where the two divers became trapped and panicked at a depth of more than 130m. They died, and others on the dive were lucky to make it out alive. You can see the story here: http://divingintotheunknown.com/en. I would highly recommend watching the film too.

Unfortunately, humans are pretty poor at risk management when it comes to uncertain or novel situations. To understand why, we need to look at how we make decisions. The first part of decision-making is “information gathering,” which primarily refers to situational awareness. The topic of situational awareness will be covered in more detail in a later article, but suffice it to say that we perceive information with our senses, determine if it is relevant and/or important, process it, and then look to the future, using the mental models we have created to predict what is likely to happen. Once we have collected the information, we make a decision, and research has shown that there are three main performance modes in this decision-making process.

1. Skill-based

2. Rule-based

3. Knowledge-based

Performance Modes

Skill-based: When we are operating in the skill-based performance mode, we are operating almost on autopilot. We are unconsciously competent. This could be when you do not think about your buoyancy control or know what your gas consumption rate is without looking at your gauge. Errors happen here when we are distracted: our autopilot jumps too far ahead and we miss steps.

Rule-based: When we are operating in the rule-based performance mode, we pattern match against previous experiences and training as well as what we have read. We pick up cues and clues, match them against previous patterns of life or expectation and make a decision based on this pattern. Errors happen here when we apply the wrong rule because we have misinterpreted the information (cues/clues), which leads to the wrong pattern being matched.

An example of this might be spending a week cave diving with large cylinders and getting used to the consumption rates at certain depths, and then spending a few days ocean diving with much smaller cylinders and at greater depths. The diver checks their gauge at the intervals they were used to but does not “notice” that the gauge is actually dropping much faster. As a consequence, they run out of gas on a 30m dive!
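The effect in this example can be sketched with some simple arithmetic. The snippet below is only an illustration: the breathing rate, depths and cylinder sizes are hypothetical values chosen for the sketch (they are not from the book), and it uses the common approximation that ambient pressure rises by about 1 bar for every 10m of depth.

```python
def ambient_pressure_bar(depth_m: float) -> float:
    """Approximate absolute pressure: 1 bar at the surface plus 1 bar per 10 m."""
    return 1.0 + depth_m / 10.0


def gauge_drop_bar_per_min(sac_l_per_min: float, depth_m: float,
                           cylinder_volume_l: float) -> float:
    """How fast the pressure gauge falls for a given surface breathing rate."""
    return sac_l_per_min * ambient_pressure_bar(depth_m) / cylinder_volume_l


# All values below are hypothetical, chosen only to illustrate the pattern.
SAC = 20.0  # surface air consumption in litres per minute (assumed)

cave = gauge_drop_bar_per_min(SAC, depth_m=20.0, cylinder_volume_l=24.0)
ocean = gauge_drop_bar_per_min(SAC, depth_m=30.0, cylinder_volume_l=10.0)

print(f"Cave dive, large cylinders:  {cave:.1f} bar/min")
print(f"Ocean dive, small cylinder:  {ocean:.1f} bar/min")
print(f"The gauge falls {ocean / cave:.1f} times faster on the ocean dive")
```

With these assumed numbers, a gauge-check interval that felt comfortable in the cave covers several times as much of the available gas on the ocean dive, even though the diver is breathing at exactly the same rate.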

The more practiced we become, the more patterns we have to match, and the less we are actively directing our attention to make decisions. We often use the frame of reference: “It looks the same, therefore it is the same, and the last time we did it, it was ok.” This is human nature—we are efficient beings!

Knowledge-based: The final mode is the knowledge-based performance mode, and this is where we start to encounter major issues with the reliability of our decision-making. The reason is that we are now trying to make a best fit, using mental shortcuts, pattern-matching and emotional drivers/biases. At this point we are not actively thinking through the problem. We are not logical.

Letting go of the line in zero visibility

An example from the book relates to Steve Bogaerts’ exit from a cave survey where he had transited through a zero-visibility section of the cave, using line touch contact. However, on the way back, as he followed the same line out, the line disappeared into the silt. He kept following it until his shoulder was touching the floor of the cave and it kept going deeper. He returned to a point a little back into the cave, dropped his scooter and markers on the line and reached back for his safety spools. They were not there, having been dropped somewhere in the cave without him realising it, as he was surveying the cave.

He decided to let go of the line and swim in a straight line where he thought the line was. Unfortunately, he hit the wall of the cave without finding the line. He carefully turned 180 degrees and swam back. He found the scooter and marker. He set off again, this time in a slightly different direction and found the exit line. As he was swimming out, he thought to himself: “Why didn’t I swim back into the cave, cut some of the permanent line, wrap that around an empty survey reel and use that as a safety spool?”

Unless we sit down and review our decision-making processes in detail, we are likely to be subject to outcome bias. For example, if we end up with a positive outcome, we judge that as a success, because we have not looked at how we got to the decision. We often judge ourselves or others more harshly if the same decision-making process ends up with an adverse outcome.

The problem with good outcomes, especially on the first attempt at something, is that you think that was the only way of solving the problem because you succeeded. Unfortunately, the error rate for the knowledge-based performance mode is in the order of 1:2 to 1:10. These are not great odds when you are dealing with potentially life-threatening situations. Think about Steve’s incident and how it might have been reviewed if he had not made it out alive to tell his tale. What about events you have had where you were close to the line and now look at things differently?
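To put the 1:2 to 1:10 error rates in perspective, here is a rough sketch of how quickly the odds stack up when a problem needs several knowledge-based decisions in a row. It reads the ratios as "1 error in 2" and "1 error in 10" decisions, and it assumes each decision is independent of the others. Both are simplifying assumptions made for this illustration, not claims from the research.

```python
def chance_all_correct(error_rate: float, decisions: int) -> float:
    """Probability that every one of `decisions` independent choices is right."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    if decisions < 0:
        raise ValueError("decisions must not be negative")
    return (1.0 - error_rate) ** decisions


# Error rates as quoted in the text, read as probabilities (an assumption).
for label, rate in (("1:10", 0.1), ("1:2", 0.5)):
    for n in (1, 3, 5):
        p = chance_all_correct(rate, n)
        print(f"Error rate {label}, {n} decision(s): "
              f"{p:.0%} chance of getting them all right")
```

Even at the optimistic end of the range, a short chain of improvised decisions leaves a substantial chance that at least one of them is wrong.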

Ditch the irrelevant—make stuff up!

As we operate within these performance modes, we have developed a great way of dealing with the vast amount of information available to us and the fact that we cannot process it all: we ditch what we do not think is relevant and/or important, we fill the gaps with what we think is happening or will happen based on previous experiences, and we just make stuff up! This is why risk management is not very good in novel situations: we do not know what is important and/or relevant until after the event. After the event, we can join the dots, working backwards in time and identifying what we perceive to be the causal factor.

When it happens to others, we often ask: “How could they not see that this was going to happen? It was obvious that that was going to be the outcome.” If it was that obvious, i.e. with 100 percent certainty it was going to happen, then surely the diver would have done something about it to prevent it from happening. This brings me to one of my favourite quotes when it comes to risk and uncertainty.

“All accidents could be prevented, but only if we had the ability to predict with 100 percent certainty what the immediate future would hold. We can’t do that, so we have to take a gamble on the few probable futures against all the billions of possible futures.” — Duncan MacKillop

Takeaways

We are all running little prediction engines in our head. We are predicting what is going to happen in the future. These engines are fuelled by experiences, knowledge and training. The more emotive the memory, the more likely it will be used to predict the future, even if it is not relevant!

For example, in the United States, there are approximately 300 to 350 general aviation fatalities every year (light aircraft, not commercial carriers). These happen in ones and twos, and rarely make the media headlines. But what if three regional jets crashed every year… do you think the perception of air travel would change?

The same applies to diving. In the United Kingdom, the risk of a diving fatality is approximately 1 in 200,000 dives. This is a very small number. However, you cannot be a fraction of dead when it happens to you or your buddy.
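As a rough illustration of what a figure like 1 in 200,000 means in practice, the sketch below turns it into an expected number of fatalities across a large number of dives and into a cumulative risk over one diver's career. It assumes every dive carries the same, independent risk, which is a simplification made for this example: real risk varies with the type of dive, the diver and the conditions. The dive counts used are hypothetical.

```python
import math

PER_DIVE_RISK = 1 / 200_000  # approximate UK figure quoted in the text


def expected_fatalities(total_dives: int,
                        per_dive_risk: float = PER_DIVE_RISK) -> float:
    """Average number of fatalities expected across `total_dives` dives."""
    return total_dives * per_dive_risk


def cumulative_risk(dives: int, per_dive_risk: float = PER_DIVE_RISK) -> float:
    """Chance of at least one fatal dive in `dives` dives, assuming independence.

    Equivalent to 1 - (1 - p) ** n, written with log1p/expm1 so that very
    small probabilities do not lose precision.
    """
    return -math.expm1(dives * math.log1p(-per_dive_risk))


# Hypothetical dive counts, for illustration only.
print(f"Across 1,000,000 dives: about {expected_fatalities(1_000_000):.0f} fatalities")
print(f"Over a 1,000-dive career: {cumulative_risk(1_000):.2%} cumulative risk")
```

The per-dive number looks tiny, but it never becomes a "fraction of dead": across a whole diving community the expected count is a whole number of people, and for an individual the risk keeps accumulating with every dive.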

We use numerous biases to help us make decisions when facing uncertainty. Most of the time, they are fine; the challenge is learning to recognise when critical decisions are being faced, and we need to apply more logic and slow down. The numbers game will catch you up at some point.

High-reliability organisations in high-risk sectors like aviation, oil and gas, nuclear and healthcare all have a chronic unease towards failure. They believe that something will go wrong; they just do not know when.

Take the same attitude to your diving: service your gear; keep your skills (especially emergency or contingency skills) at the level where you do not have to think about executing them; look for the failure points and where errors will trip you up; try not to use the mindset, “It worked okay last time”; and finally, when you have finished a dive or trip, ask yourself the question, “What was the greatest risk we took on that dive?” and address it before the next dive. ■