Diving is not without risk—there is always a chance of death. There is always a latent or potential lethality within the “system”—where system is defined as the equipment, people and the physical, social or cultural environment. We cannot make diving 100 percent safe despite what anyone tells you. We can make things safer, but we cannot make diving safe.


Factfile

Gareth Lock is a diver, trainer and researcher based in the United Kingdom. He is passionate about improving dive safety by educating divers about the role that human factors play in diving, in both successes and failures.

He runs training programmes across the globe and via an online portal. In 2018, his online programme won an award for innovation in diving.

You can find out more at: Thehumandiver.com.

Most of the time, it is the diver's skills, knowledge and attitude that prevent those risks from materialising and stop an injury or fatality from happening. However, when errors happen, the latent or hidden lethality within the system is exposed. Sometimes luck plays a part in preventing a fatality or serious injury. However, luck should never be part of the plan, except on genuine expeditions that are really pushing the boundaries. An example is the 2018 Thai cave rescue: despite significant technical and non-technical skills, equipment and resources being available, an element of luck was still needed to get the 12 junior football players and their coach out of the flooded cave system.

Before I started teaching divers about human factors, I had a career as a military aviator. I was a navigator on C-130 Hercules transport aircraft with the Royal Air Force, teaching crews night-vision goggle flying at 250ft above the ground, dropping troops from 25,000ft on HALO/HAHO missions, and flying standard strategic airlift missions. The lethality of the operating environment is clear, even on training missions. Aircraft do not simply stay in the sky when things go wrong; they have to be designed to cope with multiple failures, even when those failures are in critical systems. Achieving this required our pilots, navigators, flight engineers and loadmasters to manage the operating environment so that the system retained the capacity to fail safely, by predicting, trapping and mitigating the errors that would appear. They did this through two interdependent sets of skills:

Technical skills. Flying the aircraft, reading gauges, inputting data into systems, preparing weapon systems, managing troops in the back of the aircraft.

Non-technical skills. These come under the heading of Crew Resource Management (CRM), which includes decision-making, situational awareness, communications, leadership, teamwork, an understanding of stress and fatigue, and facilitating a Just Culture.

Non-Technical Skills/Crew Resource Management

CRM training came about because of numerous high-profile accidents in which nothing was technically wrong with the aircraft, or in which any fault was "detectable and fixable." For example, in 1978, United Airlines Flight 173 ran out of fuel while the crew was busy trying to track down a fault with the landing gear.

Analysis of these accidents showed that the majority of aviation accidents were caused by failures in decision-making, leadership, teamwork and communications, rather than by a technical fault with the aircraft that could not be fixed. Since CRM was introduced, the improved ability to predict, trap and mitigate the errors that expose the lethality in the system has made air operations safer. This applies to both civilian and military aviation, across the globe. The same non-technical skills have been ported into healthcare (e.g. NOTECHS, TeamSTEPPS and ANTS) and the oil and gas sector (e.g. WOCRM), and research from all these domains shows that performance and safety improve once a programme has been widely deployed across an organisation, from training through to operations.

Countering the lethality in diving

In recreational and technical diving, the lethality should be easy for individuals, teams and instructors to identify. However, the marketing by some training agencies and equipment manufacturers often hides it. Fundamentally, it is not a good sales pitch when a dive shop employee or equipment sales representative says to a prospective client: "You do know if you get this wrong, you could end up dead?"

Sales and marketing are about selling benefits, not products. We sell the benefit of diving as experiences based on seeing, exploring, videoing and photographing wrecks, sealife, caves or other “things.” Dying is definitely not a benefit!

To compound this problem, the GoPro culture means that we focus on outcomes (great videos of cave exploration or awesome photos of wrecks and sealife) rather than on the process of learning, failure, incidents, accidents and the investment of time. As Captain Chesley Sullenberger said after his successful ditching of US Airways Flight 1549 (call sign "CACTUS 1549") on the Hudson River: "For 42 years, I've been making small regular deposits in this bank of experience, education and training. On January 15, the balance was sufficient, so I could make a very large withdrawal."

The "hiding" of the real risks means that we do not easily understand the lethality within the system. Openly discussing near misses and close calls, and how human fallibility led to them, would help divers understand the real risks: not just "I made an error," but what led to that error developing the way it did.

Another form of this "hiding" was the topic of a recent blog post that explored the myth or misconception that "rebreathers are trying to kill you." The authors explained that the rebreather is actually trying to keep you alive. While that is true, no piece of hardware can be "safe" on its own, especially underwater.

Creating safety requires humans to adapt and react to an ever-changing situation and to predict what is going to happen next. Most accidents do not happen because of poor decisions based on sound information; rather, they happen because of a poor perception of the problem and its potential outcome.

Situational awareness—What? So what? Now what?

Situational awareness is mentioned in a number of diver training programmes, but only at a cursory level: you need to be aware of your surroundings. However, situational awareness is not just about what is happening now; it is also about creating an accurate prediction of the near future. That model of the future is based on previous experiences, goals and expectations, all shaped by the limitations of human attention. We have a finite capacity to pay attention (seven plus or minus two active elements), and yet we are often instructed to "pay more attention."

This is a flawed way to improve performance: if we do not know what was consuming the diver's limited attention, how can we ensure that their attention is pointed at the important or relevant topic at the right time?

Our ability to focus on the critical aspects of our surroundings is thanks to a part of the brain called the reticular activating system (RAS). Over the course of our lives, through learning, experience and deliberation, we have developed an intuitive sense of what we need to know and what is not critical; the RAS keeps this list in order. The RAS processes everything we encounter against four categories: things that are Dangerous, Interesting, Pleasurable and Important (DIPI).

If we encounter something that falls into the DIPI categories, we are hardwired to pay attention to it. For example, most of us will respond if we hear our name called in a crowded room (Important) or hear gas hissing from a cylinder (Important and potentially Dangerous). DIPI things get our attention, and by paying attention, we can respond appropriately. Anything that does not fit into DIPI will not demand our attention, so we are less likely to think about it or react to it. In diving, we are often operating in a new environment, so our sense of which information is critical or important may not be well developed, and we miss it.

Example of situational awareness in action

Situational awareness improves when what is important is clearly defined at the briefing stage. For example, the brief might state that the end-of-dive gas pressure is 50 bar and that this should be reached around 30 minutes into the dive. To keep track of that, the remaining gas should be approximately 150 bar at 10 minutes, 100 bar at 20 minutes and 50 bar at 30 minutes.

If the readings do not match, we should look at what factors could be affecting our consumption rate. If consumption is lower than planned, are we shallower? Is the current weaker, or am I noticeably fitter than before? If it is higher, are we deeper? Is the current stronger, or do I have more drag or lower efficiency? If we are deeper, what does that mean for the decompression obligation and the associated gas? If we are consuming more, is the end-of-dive pressure of 50 bar still valid if we are planning a shared-gas ascent?
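The checkpoint arithmetic from the briefing can be sketched in a few lines of Python. This is a hypothetical illustration, not a dive-planning tool: it assumes a 200 bar starting pressure (implied by checkpoints that drop 50 bar every 10 minutes), a constant consumption rate, and an arbitrary 10 bar tolerance. Real consumption varies with depth, current and workload, which is exactly why the checkpoints matter.

```python
# Hypothetical helpers illustrating the briefed gas plan: 50 bar at 30 minutes,
# with checkpoints of 150 bar at 10 minutes and 100 bar at 20 minutes.
# ASSUMPTIONS: a 200 bar start (implied by the checkpoints), a constant
# consumption rate, and a 10 bar tolerance chosen purely for illustration.

START_BAR = 200.0        # implied starting pressure (assumption)
END_BAR = 50.0           # briefed end-of-dive pressure
PLANNED_MINUTES = 30.0   # briefed time to reach END_BAR


def expected_pressure(minute: float) -> float:
    """Pressure the plan expects at `minute`, assuming linear consumption."""
    rate = (START_BAR - END_BAR) / PLANNED_MINUTES  # 5 bar per minute here
    return START_BAR - rate * minute


def check_gas(minute: float, actual_bar: float, tolerance_bar: float = 10.0) -> str:
    """Compare a gauge reading with the plan and report which way it deviates."""
    diff = actual_bar - expected_pressure(minute)
    if diff < -tolerance_bar:
        # Less gas left than planned means we are using it faster.
        return "higher consumption than planned"
    if diff > tolerance_bar:
        return "lower consumption than planned"
    return "on plan"


def projected_end_minute(minute: float, actual_bar: float) -> float:
    """Minute at which END_BAR is reached if the observed rate continues."""
    if minute <= 0:
        raise ValueError("need elapsed time to estimate a consumption rate")
    observed_rate = (START_BAR - actual_bar) / minute  # bar per minute so far
    if observed_rate <= 0:
        return float("inf")  # no gas used yet, so no meaningful projection
    return (START_BAR - END_BAR) / observed_rate


if __name__ == "__main__":
    for m in (10, 20, 30):
        print(f"{m} min: expect {expected_pressure(m):.0f} bar")
    # A reading of 80 bar at 20 minutes is 20 bar below the plan.
    print(check_gas(20, 80))             # higher consumption than planned
    print(projected_end_minute(20, 80))  # 25.0
```

The projection turns "Now what?" into a number: at 80 bar after 20 minutes, the diver is using 6 bar per minute rather than 5, and would reach 50 bar at around 25 minutes instead of 30.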

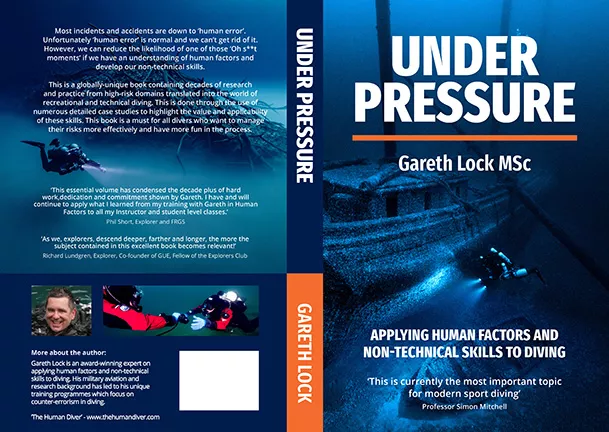

Under pressure: Stories of success and failure

Over the next eight editions of X-Ray Mag, I will summarise the key themes of the book Under Pressure: Diving Deeper with Human Factors. The book focuses on how divers can apply human factors and non-technical skills to their diving to improve their enjoyment, performance and safety.

It contains more than 30 stories of success and (recovery from) disaster, highlighting the contribution of non-technical skills to divers’ successes.

Contributors include Jill Heinerth, Richard Lundgren, Garry Dallas and Steve Bogaerts. The book covers recreational, technical, rebreather, cave and instructional diving, so it is relevant to all divers. It will be published on 12 March 2019 and will be available from The Human Diver website (Thehumandiver.com), Amazon and Waterstones, with the Kindle version following in late March.